Why 70% of Agentic AI Pilots Fail — And How Mid-Market Leaders Can Actually Scale

Why most AI experiments stall — and what it takes to turn pilots into scalable, ROI-driven enterprise systems.

Most Agentic AI pilots fail not because of the technology but because of weak governance, missing human oversight, and overengineered experimentation that never translates into measurable ROI. For mid-market firms, success depends on balancing automation with compliance, operational discipline, and production-ready architecture. The real shift is moving from isolated Agentic AI pilots to scalable systems designed for business impact.


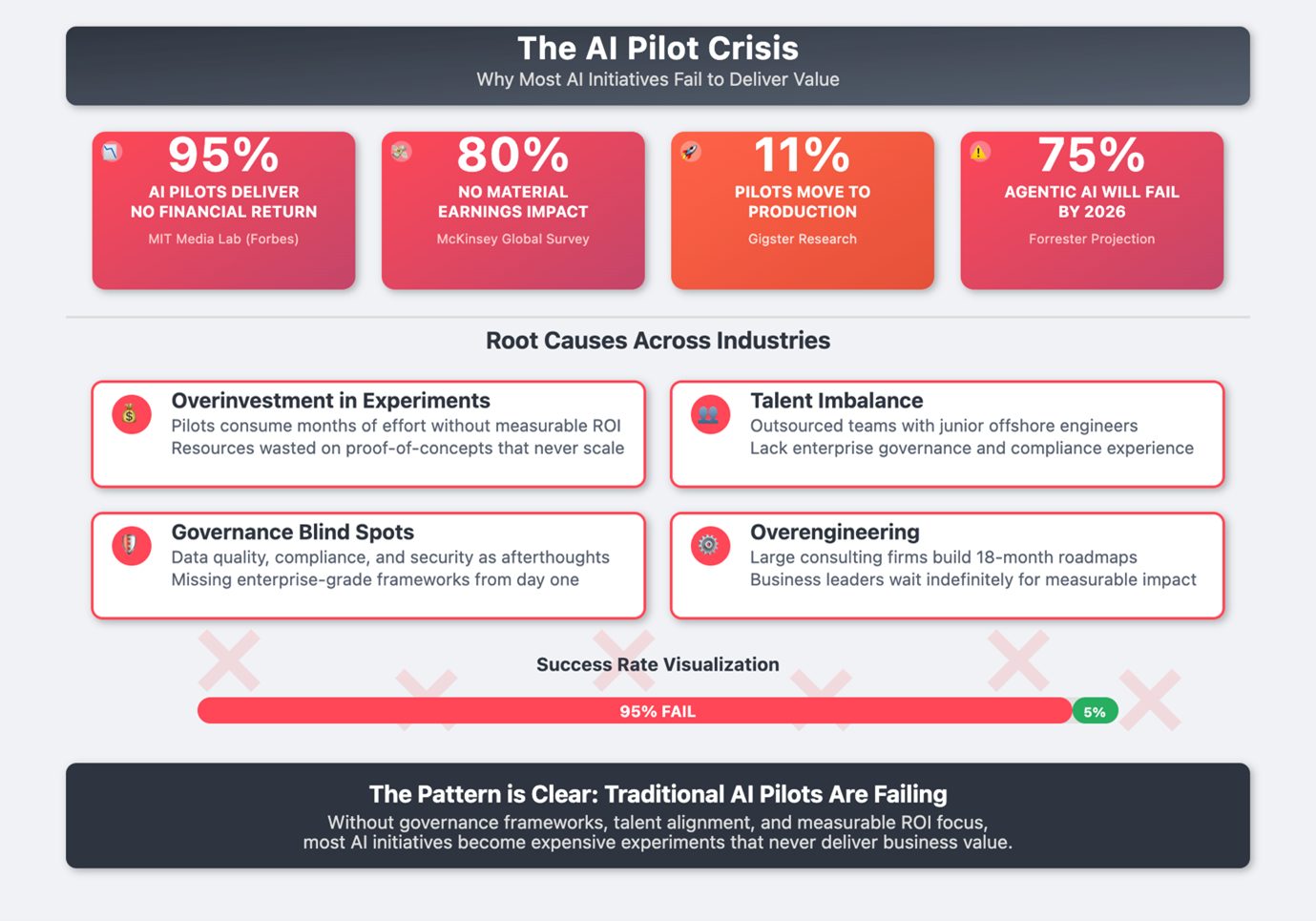

The Harsh Reality of Agentic AI Pilots

Over the last two years, Agentic AI pilots have moved from experimental initiatives to boardroom priorities. Yet despite rising investment and executive attention, most organizations struggle to scale these pilots into production systems that deliver measurable outcomes. The challenge isn’t the promise of autonomous workflows — it’s designing Agentic AI pilots with governance, integration, and business value in mind from the start.

Research consistently shows the same pattern:

95% of AI pilots deliver no measurable financial return (MIT Media Lab / Forbes)

80% of firms see no earnings impact despite adoption (McKinsey & Company)

Only 11% of pilots reach production (Gigster)

75% of agentic initiatives may fail without governance frameworks (Forrester)

The technology isn’t failing.

The operating model is.

Most organizations treat pilots as innovation exercises rather than production systems. They optimize for experimentation speed instead of economic discipline, governance readiness, or integration depth.

The result is predictable: pilots generate demos, not durable business outcomes.


Why Pilots Collapse Before They Scale

Across industries, the failure pattern looks nearly identical.

1. Experimentation Without Economic Guardrails

Pilots appear inexpensive at low volume. But once scaled, token usage expands, orchestration layers multiply, and data pipelines duplicate. Costs rise before value compounds.
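To make this cost dynamic concrete, here is a minimal sketch of a per-task unit-economics check. Every name and number in it (`WorkflowCost`, the token counts, the prices, the value per task) is a hypothetical input chosen for illustration, not a benchmark; the point is only that cost per task can be compared with value per task before a pilot scales.

```python
from dataclasses import dataclass


@dataclass
class WorkflowCost:
    """Hypothetical per-task cost inputs; every number used below is illustrative."""
    tokens_per_agent_call: int      # average tokens consumed per agent call
    agent_calls_per_task: int       # grows as orchestration layers multiply
    price_per_1k_tokens: float      # assumed blended model price in USD
    pipeline_cost_per_task: float   # assumed data pipeline overhead in USD


def cost_per_task(c: WorkflowCost) -> float:
    """Return the all-in cost of completing one task."""
    tokens = c.tokens_per_agent_call * c.agent_calls_per_task
    return tokens / 1000 * c.price_per_1k_tokens + c.pipeline_cost_per_task


def monthly_margin(c: WorkflowCost, tasks_per_month: int, value_per_task: float) -> float:
    """Return monthly value created minus monthly run cost."""
    return tasks_per_month * (value_per_task - cost_per_task(c))


# Assumed pilot profile: few agent calls, little pipeline overhead.
pilot = WorkflowCost(2000, 3, 0.01, 0.02)
# Assumed scaled profile: more orchestration hops and duplicated pipelines.
scaled = WorkflowCost(2000, 12, 0.01, 0.10)

for name, profile, volume in [("pilot", pilot, 1_000), ("scaled", scaled, 100_000)]:
    print(f"{name}: cost/task ${cost_per_task(profile):.2f}, "
          f"monthly margin ${monthly_margin(profile, volume, 0.25):.0f}")
```

Under these assumed inputs, cost per task rises from about $0.08 in the pilot to about $0.34 at scale, so a task worth $0.25 is profitable in the pilot and loss-making in production. Running a check like this before scaling is one way to put an economic guardrail around experimentation.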

2. Talent Without Governance Depth

Outsourced build teams often lack experience in enterprise controls, compliance architecture, or workflow accountability. The system runs — but it cannot pass audits or scale safely.

3. Governance Treated as a Phase, Not a Foundation

Security, lineage, and compliance are bolted on after experimentation. At that point, redesign is unavoidable.

4. Overengineering Instead of Outcome Engineering

Large consulting roadmaps stretch 18 months while business teams wait for measurable ROI.

As noted by TechRadar, many GenAI pilots today fail not due to models, but due to execution and architecture gaps.

The real problem isn’t model performance.

It’s production readiness.


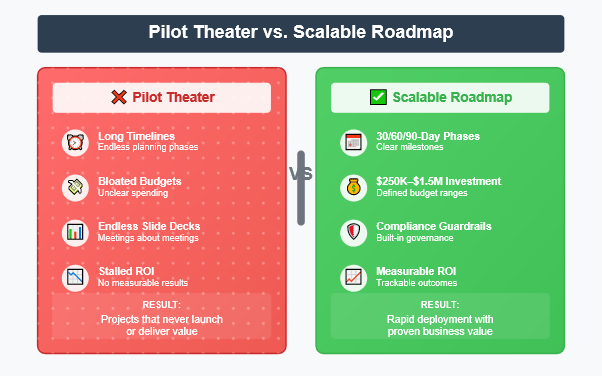

The Mid-Market Constraint: No Room for AI Theater

For mid-market firms ($10M–$100M revenue), the economics are unforgiving.

Unlike Fortune 500 enterprises, they cannot absorb multi-year experimentation cycles. Every AI investment must show value quickly and predictably.

Successful implementations share three traits:

Enterprise-grade governance from day one

Unit economics aligned with business outcomes

AI orchestration balanced with human oversight

Typical budgets range from $250K to $1.5M over roughly 90 days, far leaner than Big Four programs that exceed $10M and stretch for years.

For mid-market leaders, speed is important.

But predictability is essential.


Industry-Specific Constraints that Shape AI Success

Agentic AI doesn’t scale uniformly across industries. Regulatory structures determine how fast pilots can mature.

Finance: Compliance Must Match Velocity

SOX and SEC oversight require audit trails for every automated decision.

Without traceability, speed becomes liability.
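One way to picture traceability for automated decisions is an append-only log in which each record carries a hash of the one before it, so later edits become detectable. The sketch below is an illustration only: the `AuditTrail` class, its field names, and the sample agents are assumptions, and it does not represent a format that SOX or the SEC prescribes.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Append-only log in which each record commits to the previous one."""

    def __init__(self) -> None:
        self.records: list[dict] = []

    def record(self, agent: str, decision: str, inputs: dict) -> dict:
        """Append one automated decision and link it to the prior record."""
        prev_hash = self.records[-1]["hash"] if self.records else "0" * 64
        body = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "decision": decision,
            "inputs": inputs,
            "prev_hash": prev_hash,
        }
        body["hash"] = self._digest(body)
        self.records.append(body)
        return body

    @staticmethod
    def _digest(body: dict) -> str:
        """Hash every field except the stored hash itself."""
        payload = {k: v for k, v in body.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        """Return True only if no record was altered or reordered."""
        prev_hash = "0" * 64
        for rec in self.records:
            if rec["prev_hash"] != prev_hash or rec["hash"] != self._digest(rec):
                return False
            prev_hash = rec["hash"]
        return True


# Hypothetical agents and application data, for illustration only.
trail = AuditTrail()
trail.record("credit-check-agent", "approve", {"application_id": "A-1001", "score": 712})
trail.record("pricing-agent", "rate_tier_2", {"application_id": "A-1001"})
print(trail.verify())
```

Changing any stored field after the fact causes `verify()` to return `False`, which is the property an auditor would want from a decision log. A production system would also need durable storage, access control, and retention policies, none of which this sketch covers.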

Healthcare: Safety Overrides Automation

HIPAA requirements mean anonymization pipelines and human review layers must precede AI deployment.

Manufacturing: Safety Certification Drives Timeline

OSHA and ISO standards require override mechanisms and operational accountability before autonomous workflows are allowed.

Across sectors, the lesson is consistent:

AI architecture must be designed for compliance, not retrofitted to it.


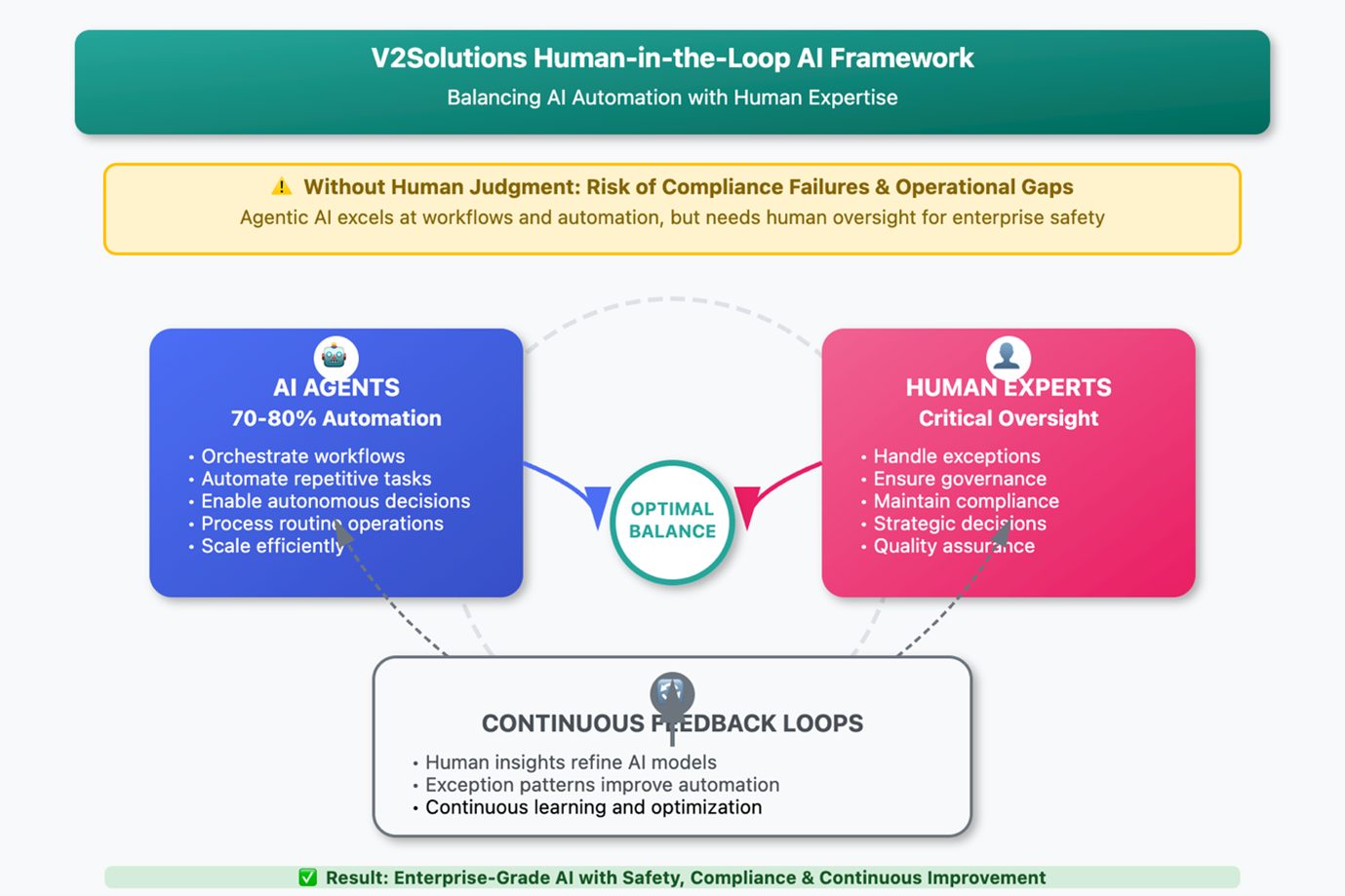

The Human + AI Balance That Actually Works

Agentic AI excels at workflow orchestration, repetitive processing, and decision routing.

But without structured human checkpoints, autonomous systems create governance risk and operational fragility.

A scalable model distributes responsibility intentionally:

AI agents automate 70–80% of routine workflows

Human experts manage exceptions, policy, and oversight

Feedback loops continuously refine system behavior

This balance converts automation from experiment to infrastructure.
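The division of labor described above can be sketched as a simple routing rule: the agent acts on its own when it is confident and no policy rule is involved, and escalates everything else to a person. The `AgentResult` fields, the 0.85 threshold, and the sample batch are all assumptions made for illustration; real thresholds would be tuned per workflow.

```python
from dataclasses import dataclass


@dataclass
class AgentResult:
    task_id: str
    action: str
    confidence: float   # agent's self-reported confidence, 0.0 to 1.0 (assumed field)
    policy_flag: bool   # True when the task touches a policy-sensitive rule (assumed field)


def route(result: AgentResult, threshold: float = 0.85) -> str:
    """Return 'auto' to execute the action or 'human_review' to escalate."""
    if result.policy_flag or result.confidence < threshold:
        return "human_review"
    return "auto"


def automation_rate(results: list[AgentResult], threshold: float = 0.85) -> float:
    """Return the share of tasks handled without human review."""
    if not results:
        return 0.0
    auto = sum(1 for r in results if route(r, threshold) == "auto")
    return auto / len(results)


# Hypothetical batch: three routine tasks, one low-confidence, one policy-sensitive.
batch = [
    AgentResult("T1", "classify_document", 0.97, False),
    AgentResult("T2", "extract_income", 0.91, False),
    AgentResult("T3", "match_records", 0.88, False),
    AgentResult("T4", "extract_income", 0.60, False),
    AgentResult("T5", "waive_fee", 0.99, True),
]

for r in batch:
    print(r.task_id, route(r))
print(f"automation rate: {automation_rate(batch):.0%}")
```

Tracking the automation rate alongside the human-review queue gives a team one number to compare against its target, and the review outcomes can feed back into how the threshold and policy flags are set.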


Case Example: Scaling Outcomes, Not Experiments

A Fortune 500 mortgage lender faced 45-day loan cycles and an operational backlog.

By deploying agentic workflows with embedded human governance:

Loan processing time dropped 67% in 10 weeks

Customer satisfaction rose sharply

Quarterly revenue expanded through faster approvals

The breakthrough came not from model upgrades, but from governance-first orchestration.

Implementation Framework: From Agentic AI Pilot to Production

Scaling Agentic AI requires operational discipline.

Governance Foundations

Define compliance requirements upfront (SOX, HIPAA, CCPA)

Establish cross-functional oversight councils

Deploy real-time monitoring dashboards

Conduct recurring compliance reviews

Vendor Selection Criteria

Proven orchestration experience in regulated environments

Compliance certifications (SOC 2, ISO 27001)

Human-in-the-loop architecture support

Pricing aligned to scalable economics

Risk Mitigation Checklist

Data lineage tracked end-to-end

Human override points embedded

Stress-tested fail-safe workflows

Regulatory timelines mapped to rollout

When governance ships with the first production release, scaling becomes incremental rather than disruptive.


Why Agentic AI Pilots Fail Differently Than Traditional Software

According to Forrester, autonomous systems introduce unique failure modes:

Task ambiguity between agents

Goal misalignment across workflows

Inter-agent coordination errors

Emergent behavior from orchestration layers

Unlike traditional bugs, these failures arise from interaction complexity rather than code defects.

That makes architecture, guardrails, and monitoring far more important than raw model performance.


Competitive Positioning: Boutique vs. Big Four

Enterprise AI delivery models differ dramatically:

Big Four Firms

Expensive discovery cycles

Compliance-heavy but slow

Long time-to-value

Offshore Vendors

Low upfront cost

High governance risk

Weak integration depth

Boutique Engineering Partners

Compliance-first design

Faster production timelines

Balanced cost and scalability

In practice, success depends less on vendor size and more on operational maturity.


Measurable Outcomes When an Agentic AI Pilot Is Executed Well

When governance, economics, and orchestration align, Agentic AI delivers:

Time-to-value measured in weeks

Up to 40% lower implementation cost

Reduced compliance exposure

Faster cycle times and operational agility

These gains emerge when AI is treated as infrastructure — not experimentation.


Closing Thought: From Pilot Theater to Production Advantage

Agentic AI doesn’t fail because the models are weak.

It fails because organizations:

Optimize for experimentation instead of outcomes

Ignore governance until late stages

Separate automation from human accountability

The enterprises pulling ahead are doing the opposite.

They design AI systems for economics, compliance, and scale from the start.

The future of enterprise AI isn’t AI vs. humans.

It’s AI engineered to work with humans, within guardrails that make outcomes predictable.

That’s how pilots become platforms.

And platforms become competitive advantage.

Ready to move from AI pilots to real ROI?

Begin with a governance-first roadmap that embeds compliance, accountability, and measurable outcomes from day one, so your AI initiatives move beyond experimentation and become secure, scalable production systems that deliver real business value.

Author’s Profile

Dipal Patel

VP Marketing & Research, V2Solutions

Dipal Patel is a strategist and innovator at the intersection of AI, requirement engineering, and business growth. With two decades of global experience spanning product strategy, business analysis, and marketing leadership, he has pioneered agentic AI applications and custom GPT solutions that transform how businesses capture requirements and scale operations. Currently serving as VP of Marketing & Research at V2Solutions, Dipal specializes in blending competitive intelligence with automation to accelerate revenue growth. He is passionate about shaping the future of AI-enabled business practices and has also authored two fiction books.