Designing Production-Grade Agent Orchestration Frameworks

Architectural patterns and governance strategies for building reliable multi-agent AI systems at enterprise scale.

Most multi-agent AI systems perform well in demonstrations but struggle in production due to orchestration complexity. This blog explores how agent orchestration frameworks enable enterprises to build reliable multi-agent systems through structured architecture, governance guardrails, and resilient recovery mechanisms.


Most multi-agent AI demonstrations work perfectly—until they encounter production reality.

A planner agent generates a task list. Worker agents invoke tools. Another agent compiles the final response. Everything appears smooth during demos and conference presentations.

Then the system enters production.

A model hallucinates a tool argument.

Two agents call the same service simultaneously.

A prompt update unexpectedly breaks downstream workflows.

An API timeout cascades across the orchestration pipeline.

Suddenly the problem is no longer the model.

It is the orchestration layer.

For CIOs and technology leaders investing in enterprise AI, this distinction is critical. The real challenge in scaling AI systems is rarely model intelligence—it is the reliability of the systems coordinating those models. This is where agent orchestration frameworks become essential.

Across numerous enterprise AI deployments, a consistent pattern has emerged: AI models rarely fail first—the surrounding systems do. Organizations implementing multi-agent workflows quickly discover that building reliable agent orchestration frameworks requires the same architectural discipline used in distributed systems and platform engineering.

Production AI systems demand structured coordination, deterministic guardrails, and resilient recovery mechanisms.


The Enterprise Gap Between AI Demos and Production Systems

Most modern AI agent frameworks focus heavily on capability—reasoning chains, tool usage, and autonomous task planning.

Enterprise systems require something different: control.

A production-ready platform must manage multiple layers simultaneously, including multi-agent coordination, tool reliability, execution state tracking, prompt lifecycle updates, and failure recovery. Without strong orchestration governance, agents behave much like distributed microservices deployed without monitoring.

Organizations quickly realize that the intelligence of the model is only one component of a reliable AI system. The architecture governing those models determines whether the system operates consistently at scale.

This is why agent orchestration frameworks are emerging as the foundational layer of enterprise AI platforms.


Core Architecture Patterns in Agent Orchestration Frameworks

When enterprises begin designing agent orchestration frameworks, they typically encounter two primary orchestration approaches: deterministic chains and planner-driven workflows.

Both architectures play an important role in production environments.

Chain-Based Orchestration

Chain-based orchestration executes a fixed sequence of steps. A system might retrieve documents, summarize them, generate a response, and then validate the output before returning results.

Because each stage is predefined, these workflows are predictable and easier to monitor. This makes chain-based orchestration particularly useful in environments where traceability and compliance matter.

These systems typically provide:

- deterministic execution workflows

- strong observability across each stage

- stable latency patterns

- easier compliance validation

For this reason, many early enterprise AI deployments relied heavily on chain-based workflows.
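The fixed retrieve, summarize, generate, validate sequence described above can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the four stage functions are hypothetical stand-ins for a real retriever and model, and the `trace` list is an assumed way of recording one entry per stage for observability.

```python
from typing import Callable

# Hypothetical stage functions; a real system would call a retriever and a model here.
def retrieve(state: dict) -> dict:
    state["documents"] = [f"doc about {state['query']}"]
    return state

def summarize(state: dict) -> dict:
    state["summary"] = " | ".join(state["documents"])
    return state

def generate(state: dict) -> dict:
    state["response"] = f"Answer based on: {state['summary']}"
    return state

def validate(state: dict) -> dict:
    # Reject an empty response before it is returned to the caller.
    if not state["response"].strip():
        raise ValueError("empty response")
    return state

# The order is fixed at design time, which is what makes the chain deterministic.
CHAIN: list[Callable[[dict], dict]] = [retrieve, summarize, generate, validate]

def run_chain(query: str) -> dict:
    state = {"query": query, "trace": []}
    for stage in CHAIN:
        state = stage(state)
        state["trace"].append(stage.__name__)  # one trace entry per completed stage
    return state
```

Because the stage list never changes at runtime, every request produces the same trace, which is the property that makes this style easy to audit.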

However, as organizations attempt to automate more complex tasks—such as financial research, analytics workflows, or development pipelines—rigid chains become limiting.

Planner-Based Orchestration

Planner-driven architectures introduce a reasoning layer capable of dynamically deciding how tasks should be executed.

Instead of following a fixed sequence, a planner agent breaks down the request into smaller tasks and determines which agents or tools should perform each action.

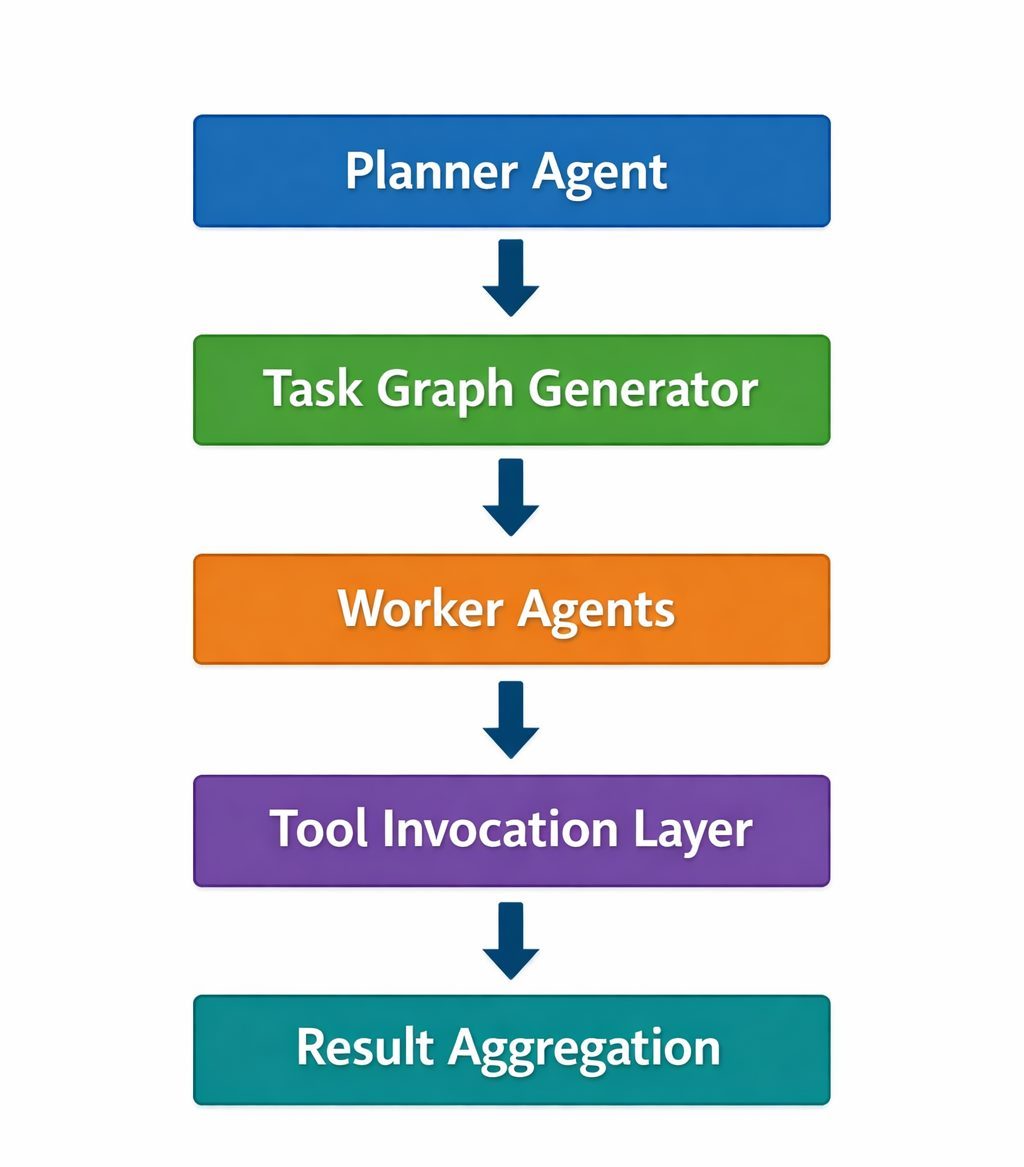

A typical structure within modern agent orchestration frameworks looks like this:

This architecture enables agent systems to solve open-ended problems and adapt to new inputs.

However, planner-driven systems introduce a new challenge: unpredictability. Two identical inputs may generate different execution paths. In production environments, this variability must be controlled.

Many enterprises therefore combine both architectures—using planner agents for reasoning while relying on deterministic pipelines for execution.
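One way to picture this hybrid is a planner that only proposes step names, while a deterministic executor checks the plan against a registry before running anything. The sketch below assumes a stubbed planner (`stub_planner`) in place of a model call, and the step names in `STEPS` are purely illustrative.

```python
# Registry of deterministic steps the executor is allowed to run (illustrative names).
STEPS = {
    "search": lambda text: f"results for {text}",
    "summarize": lambda text: f"summary of {text}",
}

def stub_planner(request: str) -> list[str]:
    """Stand-in for a model-backed planner; returns an ordered list of step names."""
    return ["search", "summarize"]

def execute_plan(request: str, planner=stub_planner) -> str:
    plan = planner(request)
    unknown = [name for name in plan if name not in STEPS]
    if unknown:
        # Reject the whole plan up front so no step runs from an invalid plan.
        raise ValueError(f"plan references unregistered steps: {unknown}")
    result = request
    for name in plan:
        result = STEPS[name](result)  # each step feeds its output to the next
    return result
```

The planner keeps its flexibility in choosing and ordering steps, but it can never cause code outside the registry to run.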


Supervisor Architectures for Scalable Agent Orchestration Frameworks

As multi-agent ecosystems grow, orchestration architectures typically evolve toward hierarchical coordination models.

One of the most effective patterns is the Supervisor–Worker architecture.

In this structure, a supervisor agent coordinates execution across multiple specialized worker agents. Rather than performing tasks itself, the supervisor delegates work, validates outputs, monitors execution states, and enforces operational policies.

Worker agents, in contrast, focus on narrow capabilities such as retrieval, analytics, summarization, coding tasks, or domain reasoning.

This design prevents agents from attempting tasks beyond their capabilities while improving reliability across the system.

Without supervision, autonomous agents behave like a distributed system without monitoring: no single component tracks what each agent did, and unpredictable execution patterns emerge over time.

For this reason, many enterprise teams treat supervisors as control planes within their agent orchestration frameworks, ensuring structured governance across agent workflows.
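A minimal sketch of the Supervisor-Worker pattern follows. It assumes workers are plain functions keyed by capability name and that "validating outputs" means rejecting empty results; both are simplifications chosen for illustration, and all names are hypothetical.

```python
from dataclasses import dataclass, field

def retrieval_worker(task: str) -> str:
    return f"retrieved: {task}"

def summary_worker(task: str) -> str:
    return ""  # simulates a worker that returns an unusable output

@dataclass
class Supervisor:
    workers: dict                              # capability name -> worker function
    log: list = field(default_factory=list)    # (capability, status) per delegation

    def delegate(self, capability: str, task: str) -> str:
        # Policy check: only registered capabilities may be invoked.
        if capability not in self.workers:
            self.log.append((capability, "rejected"))
            raise LookupError(f"no worker registered for {capability!r}")
        output = self.workers[capability](task)
        # Output validation: an empty result is treated as a failed delegation.
        status = "ok" if output.strip() else "invalid_output"
        self.log.append((capability, status))
        if status != "ok":
            raise ValueError(f"{capability} worker returned an empty output")
        return output
```

The supervisor does no task work itself; it only decides who runs, checks what comes back, and records the outcome, which is what lets it act as a control plane.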


Governance Guardrails in Production Agent Orchestration Frameworks

Reliable agent orchestration frameworks require governance mechanisms that prevent invalid tool calls and unpredictable system behavior.

Three guardrails are particularly important in production environments.

Tool contracts ensure that every tool invoked by an agent follows strict schema validation rules. If an agent generates invalid parameters, the system rejects the call rather than executing it.
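As a rough sketch of a tool contract, the example below checks an agent's proposed arguments against a declared schema before the tool is allowed to run. The tool name `get_invoice` and its parameters are hypothetical, and the type-only check is an assumption; production systems often use a fuller schema language.

```python
# Hypothetical contract for a hypothetical tool named "get_invoice".
CONTRACTS = {
    "get_invoice": {"invoice_id": str, "include_lines": bool},
}

def validate_call(tool: str, args: dict) -> list[str]:
    """Return a list of contract violations; an empty list means the call is valid."""
    contract = CONTRACTS.get(tool)
    if contract is None:
        return [f"unknown tool: {tool}"]
    errors = []
    for name, expected in contract.items():
        if name not in args:
            errors.append(f"missing argument: {name}")
        elif not isinstance(args[name], expected):
            errors.append(f"{name} must be {expected.__name__}")
    # Arguments the contract does not declare are treated as violations too.
    errors += [f"unexpected argument: {name}" for name in args if name not in contract]
    return errors

def call_tool(tool: str, args: dict, registry: dict):
    errors = validate_call(tool, args)
    if errors:
        # Reject the call instead of executing it with invalid parameters.
        raise ValueError("; ".join(errors))
    return registry[tool](**args)
```

Returning every violation at once, rather than stopping at the first, gives the agent enough feedback to correct the call in a single retry.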

Prompt versioning provides lifecycle control over prompt updates. Without versioning, small prompt changes can break entire workflows. Production systems therefore treat prompts like application code, introducing version control, environment configurations, and rollback capabilities.
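Treating prompts like application code can be illustrated with a small registry that keeps every published version and tracks which one is active per environment. This is an in-memory sketch under assumed names (`PromptRegistry`, `publish`, `promote`); a real deployment would more likely back it with a version control system or a database.

```python
class PromptRegistry:
    """In-memory sketch of versioned prompts with per-environment activation."""

    def __init__(self):
        self._versions = {}  # prompt name -> list of texts (list index + 1 = version)
        self._active = {}    # (prompt name, environment) -> active version number

    def publish(self, name: str, text: str) -> int:
        # Versions are append-only, so earlier prompts stay available for rollback.
        self._versions.setdefault(name, []).append(text)
        return len(self._versions[name])

    def promote(self, name: str, env: str, version: int) -> None:
        if not 1 <= version <= len(self._versions.get(name, [])):
            raise ValueError(f"{name} has no version {version}")
        self._active[(name, env)] = version

    def get(self, name: str, env: str) -> str:
        return self._versions[name][self._active[(name, env)] - 1]
```

In this design a rollback is simply a `promote` call that points an environment back at an earlier version number.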

Routing policies determine which agent processes each request. Large ecosystems may include dozens of specialized agents, and effective routing combines rule-based logic with intent classification models.
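Combining rule-based logic with intent classification might look like the sketch below: explicit rules are checked first, and a classifier is consulted only when no rule matches. The agent names, the patterns, the stubbed classifier, and the 0.6 confidence threshold are all assumptions made for illustration.

```python
import re

# Rules are evaluated in order; the first matching pattern wins.
RULES = [
    (re.compile(r"\b(invoice|refund)\b", re.I), "billing_agent"),
    (re.compile(r"\b(stack trace|exception)\b", re.I), "debugging_agent"),
]

def stub_classifier(text: str) -> tuple[str, float]:
    """Stand-in for an intent model; returns (agent name, confidence)."""
    if "compare" in text.lower():
        return ("research_agent", 0.9)
    return ("general_agent", 0.3)

def route(text: str, classifier=stub_classifier, threshold: float = 0.6) -> str:
    for pattern, agent in RULES:
        if pattern.search(text):
            return agent
    agent, confidence = classifier(text)
    # Low-confidence predictions go to a safe default instead of a guessed agent.
    return agent if confidence >= threshold else "fallback_agent"
```

Putting rules ahead of the classifier keeps the highest-stakes routes deterministic while still covering requests no rule anticipates.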

Together, these governance layers transform experimental AI workflows into reliable enterprise systems.


Designing Agent Orchestration Frameworks for Failure Recovery

Even the most sophisticated agent orchestration frameworks must assume that failures will occur.

Production environments regularly encounter tool timeouts, API outages, hallucinated parameters, or conflicting outputs between agents. Without structured recovery mechanisms, these failures can cascade across the system.

Resilient architectures incorporate fallback execution paths that activate when planner workflows fail. If the planner cannot produce or complete a valid plan, a deterministic pipeline can still retrieve relevant information and generate a structured response.
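A fallback path of this kind can be expressed as a thin wrapper. In this sketch, `planner_workflow` and `deterministic_pipeline` are hypothetical callables supplied by the caller, and catching every exception is a simplification; a real system would likely distinguish recoverable failures from ones that should surface.

```python
def run_with_fallback(request, planner_workflow, deterministic_pipeline):
    """Try the planner path first; on any failure, answer via the fixed pipeline."""
    try:
        return {"path": "planner", "result": planner_workflow(request)}
    except Exception as exc:  # deliberately broad in this sketch
        return {
            "path": "fallback",
            "result": deterministic_pipeline(request),
            "planner_error": repr(exc),  # keep the cause for later diagnosis
        }
```

Recording which path served the request makes it possible to measure how often the fallback is used, which is itself a useful health signal.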

Tool layers also implement reliability mechanisms commonly used in distributed systems, such as retry logic, circuit breakers, and degraded service modes.
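The sketch below combines bounded retries with a simple circuit breaker around a tool call. The class and parameter names are illustrative, the thresholds are arbitrary defaults, and the clock is injectable only so the behavior can be exercised without waiting.

```python
import time

class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and the tool is not called at all."""

class CircuitBreaker:
    def __init__(self, max_failures=3, reset_after=30.0, clock=time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def call(self, fn, *args, retries=2, backoff=0.0):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("tool temporarily disabled")
            self.opened_at = None  # cool-down elapsed: allow a trial call
        for attempt in range(retries + 1):
            try:
                result = fn(*args)
            except Exception:
                self.failures += 1
                tripped = self.failures >= self.max_failures
                if tripped:
                    self.opened_at = self.clock()  # open the circuit
                if tripped or attempt == retries:
                    raise  # stop retrying and surface the original error
                time.sleep(backoff * 2 ** attempt)  # exponential backoff
            else:
                self.failures = 0  # any success closes the circuit fully
                return result
```

A caller can catch `CircuitOpenError` and return a reduced response, which is one way to implement a degraded service mode.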

Finally, safe execution boundaries restrict agent autonomy. Tool access scopes, execution time limits, and approval layers ensure that agents cannot perform unsafe actions within enterprise environments.
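These three boundaries can be sketched as a single guard in front of every tool call. The policy table, agent name, and tool names below are hypothetical. The time limit here uses a worker thread, which is an assumption with a known caveat: the caller stops waiting at the deadline, but the thread itself is not killed, so hard limits would need process-level isolation.

```python
from concurrent.futures import ThreadPoolExecutor, TimeoutError as FutureTimeout

class PolicyViolation(Exception):
    pass

# Hypothetical per-agent policy: tool scope, approval requirements, time limit.
POLICY = {
    "research_agent": {
        "allowed_tools": {"search", "send_email"},
        "needs_approval": {"send_email"},
        "timeout_s": 2.0,
    },
}

def guarded_call(agent, tool_name, tool_fn, *args, approved=False):
    policy = POLICY.get(agent)
    if policy is None or tool_name not in policy["allowed_tools"]:
        raise PolicyViolation(f"{agent} may not call {tool_name}")
    if tool_name in policy["needs_approval"] and not approved:
        raise PolicyViolation(f"{tool_name} requires approval")
    pool = ThreadPoolExecutor(max_workers=1)
    future = pool.submit(tool_fn, *args)
    try:
        return future.result(timeout=policy["timeout_s"])
    except FutureTimeout:
        raise PolicyViolation(f"{tool_name} exceeded its time limit") from None
    finally:
        pool.shutdown(wait=False)  # do not block the caller on a slow tool
```

Checking scope and approval before the tool is even submitted means a denied call has no side effects at all.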


Evaluating and Operating Agent Orchestration Frameworks at Scale

Building agent orchestration frameworks is only the beginning. Maintaining reliability requires continuous monitoring and evaluation.

Enterprise platforms track metrics such as task completion success rates, routing accuracy across agents, tool invocation reliability, response latency, token usage, and cost per task.
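As a worked illustration, the metrics listed above can be derived from per-task records. The record fields and the flat per-1,000-token price are assumptions for this sketch; real pricing usually differs between input and output tokens and between models.

```python
from dataclasses import dataclass

@dataclass
class TaskRecord:
    succeeded: bool
    routed_correctly: bool
    tool_calls: int
    tool_failures: int
    latency_ms: float
    tokens: int

def summarize_metrics(records: list, usd_per_1k_tokens: float) -> dict:
    if not records:
        raise ValueError("no task records to summarize")
    n = len(records)
    total_calls = sum(r.tool_calls for r in records)
    total_failures = sum(r.tool_failures for r in records)
    total_tokens = sum(r.tokens for r in records)
    return {
        "task_success_rate": sum(r.succeeded for r in records) / n,
        "routing_accuracy": sum(r.routed_correctly for r in records) / n,
        # Undefined when no tools were called, so report None instead of dividing by zero.
        "tool_reliability": 1 - total_failures / total_calls if total_calls else None,
        "mean_latency_ms": sum(r.latency_ms for r in records) / n,
        "tokens_per_task": total_tokens / n,
        "cost_per_task_usd": total_tokens / 1000 * usd_per_1k_tokens / n,
    }
```

For example, four tasks of 1,000 tokens each at an assumed 0.50 USD per 1,000 tokens cost 2.00 USD in total, or 0.50 USD per task.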

Evaluation pipelines typically include synthetic benchmark tasks, replay testing using historical datasets, human evaluation layers, and automated regression testing.
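Replay testing can be sketched as a loop that reruns stored inputs through the current system and compares each output with a recorded expectation. The case format (`id`, `input`, `expected`) and the pluggable `check` function are assumptions; exact-match comparison rarely suits free-text outputs, so `check` is left to the caller.

```python
def replay(cases, run_task, check):
    """Rerun historical cases and report which ones no longer pass."""
    failures = []
    for case in cases:
        try:
            output = run_task(case["input"])
            ok = check(output, case["expected"])
        except Exception as exc:
            # A crash counts as a regression and is recorded like any other failure.
            output, ok = repr(exc), False
        if not ok:
            failures.append({"id": case["id"], "output": output})
    return {
        "total": len(cases),
        "passed": len(cases) - len(failures),
        "failures": failures,
    }
```

Running the same case set before and after a prompt or routing change turns this loop into an automated regression test.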

Some organizations even deploy evaluation agents that review the outputs of other agents, creating a feedback loop that improves reliability over time.

This operational layer transforms experimental AI workflows into sustainable enterprise platforms.


Agent Orchestration Frameworks Are Becoming the AI Platform Layer

The most important realization for organizations deploying agentic AI is simple.

Agent orchestration frameworks are not features. They are infrastructure.

Reliable enterprise systems combine multiple layers:

- planner reasoning engines

- deterministic execution pipelines

- supervisor control planes

- tool contract validation

- prompt governance

- failure recovery mechanisms

- continuous evaluation systems

Organizations that treat orchestration as infrastructure—not just a framework—are the ones that successfully scale AI systems.

Across hundreds of platform engineering initiatives, V2Solutions has consistently observed that durable AI deployments prioritize architecture, governance, and operational reliability over experimentation alone.

The future of enterprise AI will not be defined by better prompts.

Ready to move your multi-agent AI systems from experimentation to production reliability?

Explore how robust agent orchestration frameworks can enable scalable, secure, and well-governed AI systems across your enterprise.

